Taming the Chaos using Cat-a-lot

Turned 6 years of fragmented feedback into a shipped cross-tool workflow used by 2,000+ Wikimedia Commons editors.

Cat-a-lot (Wikimedia Coolest Tool Award 2024) lets contributors bulk-categorize thousands of media files on Wikimedia Commons. Power users, who run hundreds of operations per session, kept hitting the same workflow breakdowns: lost state, an unstable UI, and a tool that couldn't scale beyond medium batches.

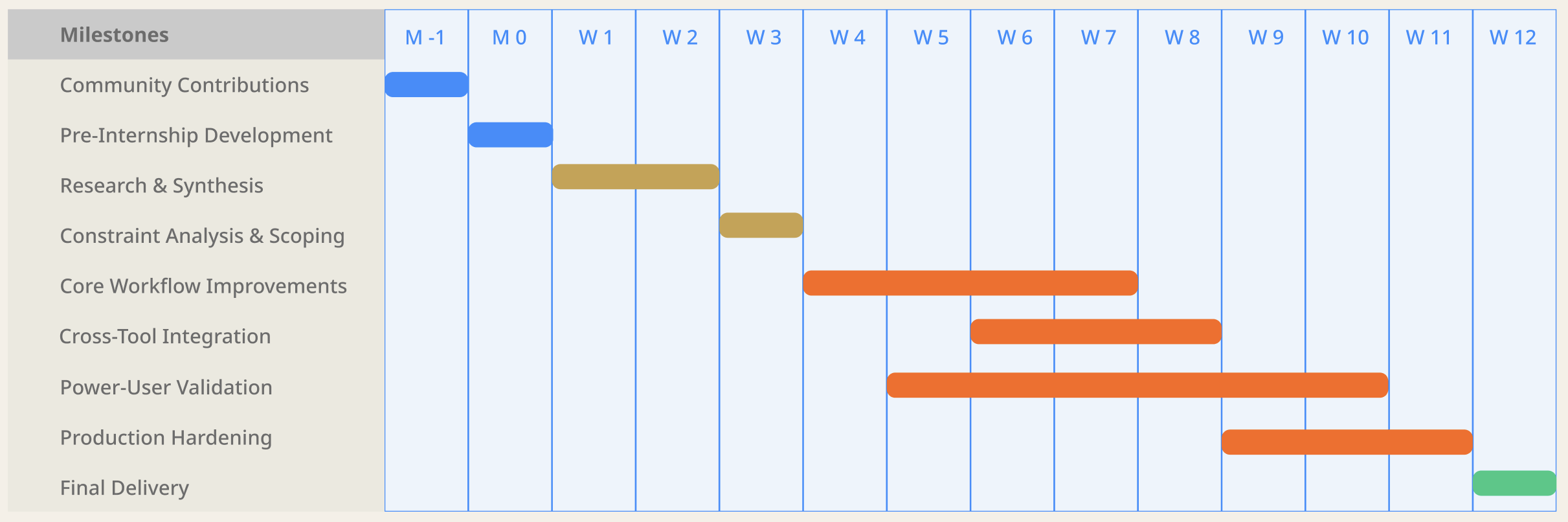

I joined as an Outreachy intern and reframed the work: rather than patching a list of bugs, I diagnosed the underlying workflow failures, made deliberate prioritization decisions, and redesigned both the tool and how it connected to the broader ecosystem.

The Problem

Fragmented feedback hiding systemic friction

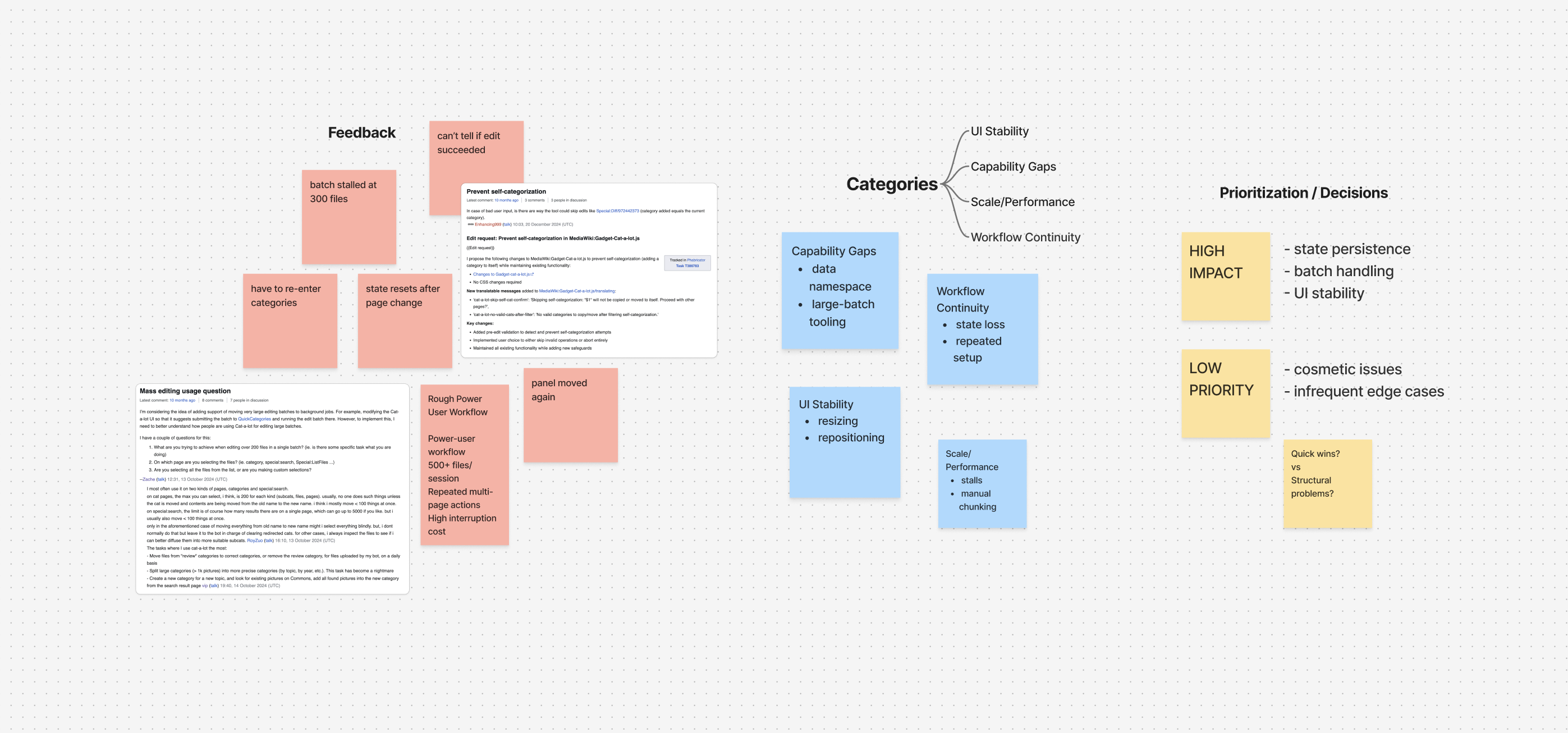

Feedback was scattered across Cat-a-lot's talk page, Phabricator tasks, and community discussion threads, spanning 2019 to 2025. My first move was to treat all of it as a single dataset rather than triaging issues individually.

After consolidating and clustering feedback, four clear failure modes emerged:

State loss on every page turn: Contributors re-entered search queries and category inputs repeatedly across multi-page sessions. This was the highest-frequency disruption for regular users.

Unpredictable UI behaviour: The panel resized, repositioned, or reset mid-session with no clear trigger, making extended use exhausting.

Performance ceiling at scale: Beyond a medium batch size, operations slowed, stalled, or required manual splitting into chunks.

Opaque action feedback: Users couldn't reliably tell whether an operation succeeded, partially completed, or failed silently.

Key Insight

The real constraint wasn't the UI; it was the system.

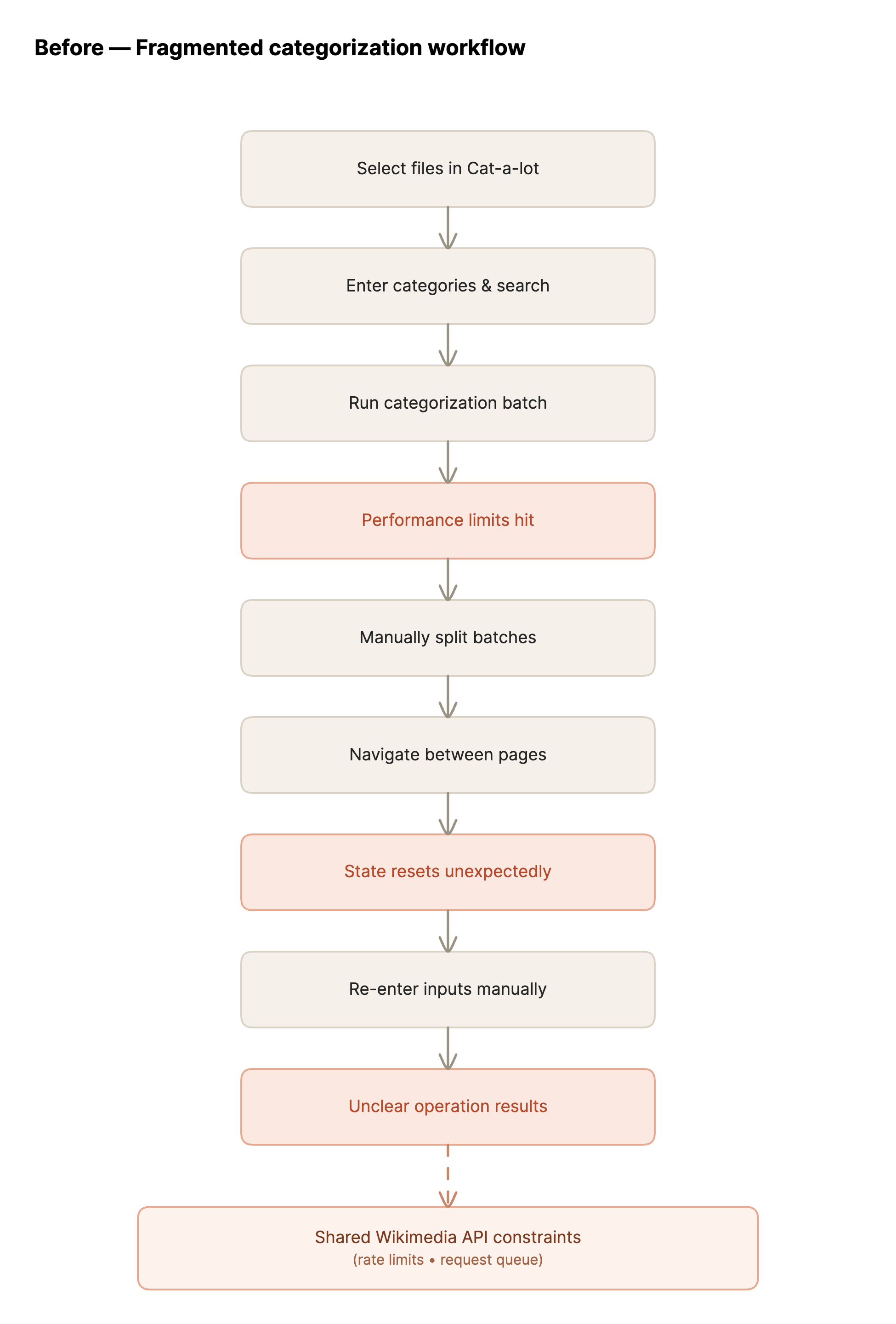

All Wikimedia gadgets share a single API module that queues requests, enforces rate limits, and protects system stability. This is intentional at Wikimedia's scale. But it also means there is a hard ceiling on the batch throughput Cat-a-lot can handle consistently on its own.

That realization shifted the question from "How do I fix this tool?" to "How do I design user workflows that scale within platform constraints?" Solving it required thinking across tools, not just within one.

Approach

Method

Before writing a line of code, I structured the problem using the following approach:

01 - Aggregate before prioritizing: Pulled every piece of feedback into a single view. The goal was pattern recognition, not issue-by-issue triage.

02 - Cluster by workflow failure, not symptom: Grouped issues into four themes (workflow continuity, UI stability, scale/performance, and capability gaps).

03 - Weight by power-user impact: Deprioritized issues that only affected occasional users. Prioritized anything that compounded across repeated high-frequency use.

04 - Separate quick wins from structural problems: Surface-level fixes could be merged quickly. Scaling required designing across tools rather than over-engineering one.

Key Decisions & Trade-offs

Abandon the jQuery → Codex migration

After an in-depth feasibility study, I found that Codex, Wikimedia's new design system, lacked the adoption breadth to make the migration viable within the internship timeline. I made the call to redirect scope to improvements that would ship.

Gained

Shipped real, usable improvements within 3 months

Accepted

No design system modernization in this cycle

Don't touch core infrastructure

The shared Wikimedia API module required cross-team coordination on timelines that didn't fit a 3-month internship. Working around it, not through it, was the right call.

Gained

Delivered within scope and timeline

Accepted

No direct API performance gains

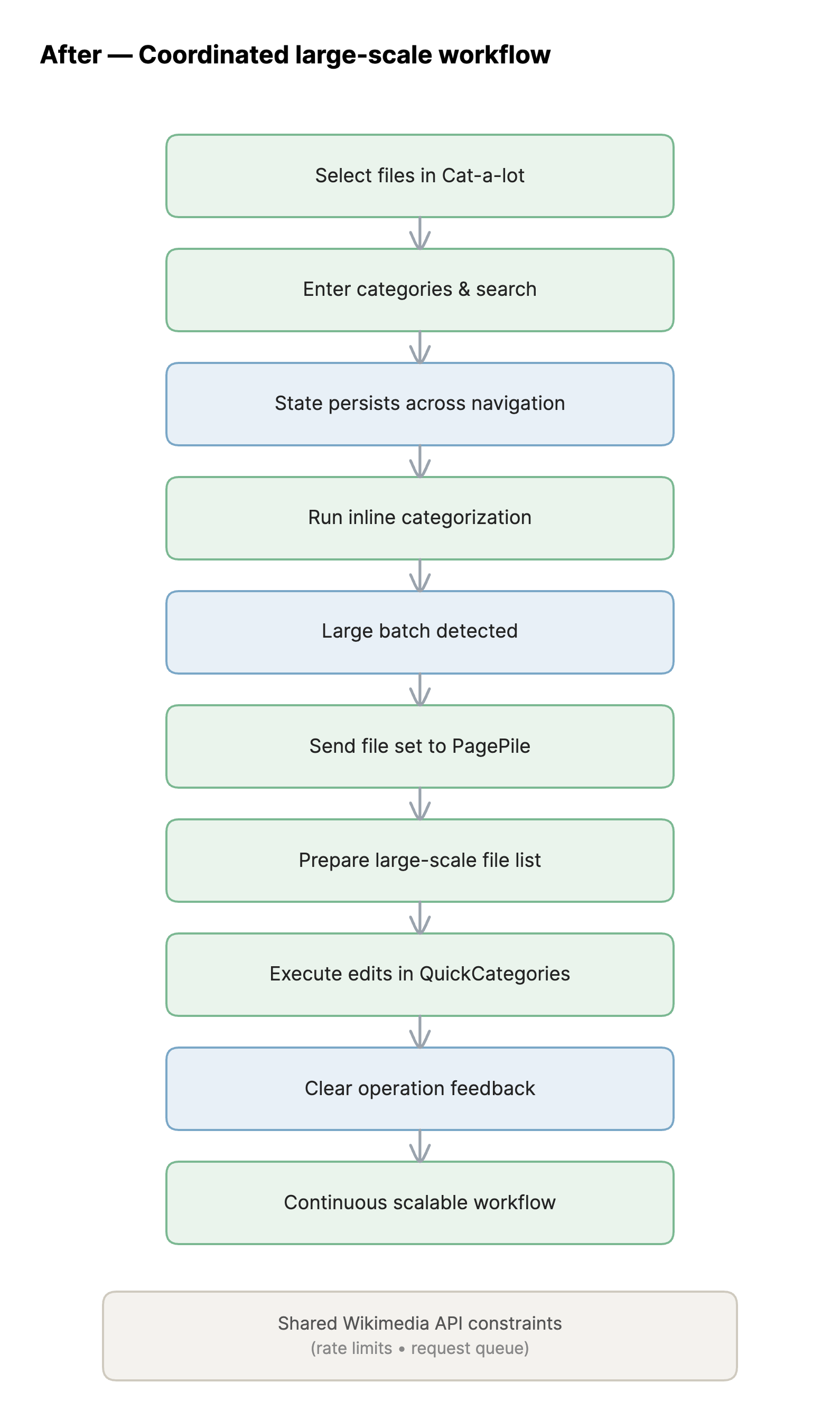

Integrate tools, don't extend the tool

Cat-a-lot wasn't architected for very large batch ops. PagePile and QuickCategories already solved parts of the problem. Integration let each tool do what it was designed for.

Gained

Scalable workflow with modular components

Accepted

More cross-tool coordination required

Workflow continuity over new features

Users were abandoning sessions due to state loss and UI instability. Adding features on a broken foundation would increase complexity without improving experience.

Gained

Stable, reliable core workflows

Accepted

Feature roadmap deferred

Solution & Execution

Workflow Continuity: Persisted selected categories and search inputs across page navigation, eliminating the most frequent point of re-entry. Maintained panel open/closed state. Added navigation warnings when active selections would be lost.
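The persistence idea above can be sketched roughly as follows. This is a minimal illustration, not Cat-a-lot's actual code: it assumes a key-value store shaped like the browser's Web Storage API (for example `sessionStorage`), and the names `PanelState`, `KeyValueStore`, `saveState`, `loadState`, `createMemoryStore`, and the storage key are all hypothetical.

```typescript
// Hypothetical shape of the panel state worth keeping between page loads.
interface PanelState {
  searchQuery: string;
  selectedCategories: string[];
  panelOpen: boolean;
}

// Subset of the Web Storage API, so the sketch also runs outside a browser.
interface KeyValueStore {
  getItem(key: string): string | null;
  setItem(key: string, value: string): void;
}

const STORAGE_KEY = "catalot-panel-state"; // assumed key name, not from the source

function defaultState(): PanelState {
  return { searchQuery: "", selectedCategories: [], panelOpen: false };
}

function saveState(store: KeyValueStore, state: PanelState): void {
  store.setItem(STORAGE_KEY, JSON.stringify(state));
}

// Restore the saved state, falling back to defaults if nothing usable is stored.
function loadState(store: KeyValueStore): PanelState {
  const raw = store.getItem(STORAGE_KEY);
  if (raw === null) {
    return defaultState();
  }
  try {
    const parsed = JSON.parse(raw);
    const categories: string[] = [];
    if (Array.isArray(parsed.selectedCategories)) {
      for (const item of parsed.selectedCategories) {
        if (typeof item === "string") {
          categories.push(item);
        }
      }
    }
    return {
      searchQuery: typeof parsed.searchQuery === "string" ? parsed.searchQuery : "",
      selectedCategories: categories,
      panelOpen: parsed.panelOpen === true,
    };
  } catch {
    return defaultState(); // corrupted or unexpected data
  }
}

// In-memory stand-in for sessionStorage, used here only for demonstration.
function createMemoryStore(): KeyValueStore {
  const data: Record<string, string> = {};
  return {
    getItem(key: string): string | null {
      const value = data[key];
      return value === undefined ? null : value;
    },
    setItem(key: string, value: string): void {
      data[key] = value;
    },
  };
}

const store = createMemoryStore();
saveState(store, {
  searchQuery: "lighthouses",
  selectedCategories: ["Lighthouses in Norway"],
  panelOpen: true,
});
const restored = loadState(store);
console.log(restored.selectedCategories);
```

Validating each field on load, rather than trusting the stored JSON, keeps a stale or corrupted entry from breaking the panel on the next page.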

UI Stability: Resolved panel resizing and repositioning bugs. Improved result messaging so users could distinguish success, partial completion, and failure.
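One way to make the three outcomes distinguishable is to derive the message from per-file results. The sketch below is illustrative only: the `FileResult` shape, the `summarizeBatch` function, and the message wording are assumptions, not the tool's actual implementation.

```typescript
type BatchOutcome = "success" | "partial" | "failure";

// Hypothetical per-file result; the real tool's data shape may differ.
interface FileResult {
  title: string;
  ok: boolean;
}

interface BatchSummary {
  outcome: BatchOutcome;
  message: string;
  failedTitles: string[];
}

// Classify a finished batch so the user sees one unambiguous outcome.
function summarizeBatch(results: FileResult[]): BatchSummary {
  const failedTitles: string[] = [];
  for (const result of results) {
    if (!result.ok) {
      failedTitles.push(result.title);
    }
  }
  const total = results.length;
  const failed = failedTitles.length;

  if (total === 0) {
    // Design choice in this sketch: an empty batch is reported, not silently ignored.
    return { outcome: "failure", message: "No files were processed.", failedTitles };
  }
  if (failed === 0) {
    return { outcome: "success", message: `All ${total} files updated.`, failedTitles };
  }
  if (failed === total) {
    return { outcome: "failure", message: `None of the ${total} files could be updated.`, failedTitles };
  }
  return {
    outcome: "partial",
    message: `${total - failed} of ${total} files updated; ${failed} failed.`,
    failedTitles,
  };
}

const summary = summarizeBatch([
  { title: "File:A.jpg", ok: true },
  { title: "File:B.jpg", ok: false },
  { title: "File:C.jpg", ok: true },
]);
console.log(summary.outcome, summary.message, summary.failedTitles);
```

Returning the failed titles alongside the message lets the interface offer a retry for just those files instead of the whole batch.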

Capability Expansion: Added Data namespace support. Wrote developer documentation covering architecture, testing workflow, and contribution guidelines.

Cross-Codebase Fix: During integration testing, I discovered QuickCategories was missing API parameters required for the pipeline to function. I read the codebase directly, identified the missing handling, implemented the fix, and got it reviewed and merged, which unblocked the entire integration.

System-Level Thinking

For large-scale operations, improving Cat-a-lot in isolation had a hard ceiling. The solution required redesigning the workflow across tools. The cross-codebase fix was the unlock: QuickCategories had no handling for the required API parameters, and no documentation flagged the gap. Without that fix, the entire cross-tool workflow would have been blocked.

Validation

A beta version was published as a user script. To reach power users, I posted to Cat-a-lot's talk page and Wikimedia Commons' Village Pump, and asked my mentor to reach out to known power users directly.

Most testers were non-technical: they use the tool, not the code. My process was to receive feedback, translate it into underlying workflow failures, re-cluster it against the original four failure modes, and prioritize fixes accordingly.

What structured testing surfaced:

- UI instability under repeated use that didn't appear in single-session testing

- Edge cases in batch operations triggered only at high volume

- Workflow gaps between tools that seemed fine in isolation but broke in sequence

Impact

What I'd Do Next

1. Unify the cross-tool workflow: The pipeline works but requires manual coordination at each handoff. The next step is surfacing the flow directly inside Cat-a-lot's interface: a guided handoff mode that detects when a selection exceeds inline batch capacity and offers to send it to PagePile automatically.

2. Instrument long-running operations: Large batch operations give users no signal about progress. Adding a percentage indicator would reduce abandonment and make it easier to see where operations fail.

3. Persistent, resumable workflows: The current state persistence is session-scoped. For contributors managing very large categorization projects across multiple sessions, the tool still forces a restart. This requires a persistence layer that doesn't exist yet, which makes it the right item to sequence last.
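The first two items could start from logic as small as the sketch below. It is a rough illustration under stated assumptions: the threshold `INLINE_BATCH_LIMIT` is a made-up number (Cat-a-lot's real inline capacity is not stated here), and `chooseRoute`, `progressPercent`, and the prompt wording are hypothetical names, not existing code.

```typescript
type WorkflowRoute = "inline" | "handoff";

interface RoutingDecision {
  route: WorkflowRoute;
  prompt: string;
}

// Hypothetical threshold; the real inline batch capacity is not known here.
const INLINE_BATCH_LIMIT = 200;

// Decide whether a selection stays in the tool or is offered for handoff.
function chooseRoute(
  selectionSize: number,
  inlineLimit: number = INLINE_BATCH_LIMIT
): RoutingDecision {
  if (selectionSize <= inlineLimit) {
    return { route: "inline", prompt: `Process ${selectionSize} files here.` };
  }
  return {
    route: "handoff",
    prompt: `${selectionSize} files exceed the inline limit of ${inlineLimit}. Send the selection to PagePile?`,
  };
}

// Whole-number progress for a long-running batch, clamped to the range 0 to 100.
function progressPercent(completed: number, total: number): number {
  if (total <= 0) {
    return 0;
  }
  const clamped = Math.max(0, Math.min(completed, total));
  return Math.floor((clamped / total) * 100);
}

const decision = chooseRoute(5000);
console.log(decision.route, decision.prompt);
console.log(progressPercent(50, 200));
```

Keeping the limit as a parameter means the threshold can be tuned from real usage data rather than hard-coded into the interface.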